For more than a year I have been building a little farm of machines. They run a series of programs I wrote for the procedural generation. They work in parallel, sometimes doing the same task over different locations of the virtual world, sometimes running very different tasks one from another. Their efforts are highly coordinated. Thanks to this I can get large portions of terrain, forests and buildings generated in very little time. I can see the results of the changes I do to the world definition without having to wait too long.

What it is best, this setup allows me to throw more nodes in the network at any time. I only have three decent machines in the farm right now. They are old gaming rigs I found on Kijiji around Montreal. The minimum spec is 8 Gigs RAM, 3 or 4 cores and an ATI video card better o equal to a 4770. I need them to have GPUs because some algorithms use OpenCL. I cannot afford to get too many of them right now, but having software that scales over multiple systems is already saving me time.

What I like the most is that it really feels like a single very powerful machine. The existence of the farm is completely transparent to the application I use to design virtual worlds. I will cover this application in a future post, you have already seen many screenshots taken out of it without knowing.

I would like to introduce you to some different animals I keep in this farm and explain a little about what they do.

Dispatcher

The dispatcher controls everything that happens in the farm. There is a single instance of this process for the entire farm. At any time the dispatcher keeps tracks of the different jobs currently active. Each job may be at a different stage. The dispatcher knows to which farm worker should direct the next request. All the coordination happens over TCP/IP. The dispatcher listens on two different ports. One is for the farm workers to report their progress and get new work assignments. The other one is so clients of the farm can request new jobs and also query the status of ongoing jobs.

Contour

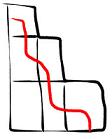

Several layers make the virtual world. Some are terrain layers, some vegetation, some are buildings and roads. All these layers have something in common, they represent a volume with an inside and an outside. Contouring is the process that allows to find the surface that divides the inside from the outside. The world is broken into many Octree cells. Each contour worker can process a cell individually. It knows which layers intersect the cell so it runs an algorithm known as Dual Contouring on the contents of the cell. The result is a very detail polygonal mesh.

Several layers make the virtual world. Some are terrain layers, some vegetation, some are buildings and roads. All these layers have something in common, they represent a volume with an inside and an outside. Contouring is the process that allows to find the surface that divides the inside from the outside. The world is broken into many Octree cells. Each contour worker can process a cell individually. It knows which layers intersect the cell so it runs an algorithm known as Dual Contouring on the contents of the cell. The result is a very detail polygonal mesh.Decimate

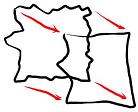

The meshes produced by the contour phase are very dense. If they were fed to the next processing stages it would slow them down. For this reason they go through a phase of decimation. This is a fast Multi-Choice mesh optimization that preserves topology, and only removes those triangles that bring very little difference to the mesh. The resulting mesh is very close to the original, but the number of triangles is drastically reduced..

The meshes produced by the contour phase are very dense. If they were fed to the next processing stages it would slow them down. For this reason they go through a phase of decimation. This is a fast Multi-Choice mesh optimization that preserves topology, and only removes those triangles that bring very little difference to the mesh. The resulting mesh is very close to the original, but the number of triangles is drastically reduced..Reduce

I use a LOD system to replace several distant small cells by a larger cell. Since they are Octree cells, this means combining eight children cells into one large parent cell. Even if it covers eight times the space, the parent cell must be similar in byte-size than the child cells. This means the eight children must be brought together and compressed. The compression at this phase does change the mesh topology, otherwise it would be impossible to achieve the target sizes. Then the resulting parent cells are again combined into a larger parent cell and so on, until the highest LOD cells are obtained.

I use a LOD system to replace several distant small cells by a larger cell. Since they are Octree cells, this means combining eight children cells into one large parent cell. Even if it covers eight times the space, the parent cell must be similar in byte-size than the child cells. This means the eight children must be brought together and compressed. The compression at this phase does change the mesh topology, otherwise it would be impossible to achieve the target sizes. Then the resulting parent cells are again combined into a larger parent cell and so on, until the highest LOD cells are obtained.Project

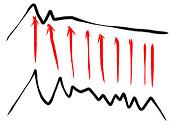

This process takes a high resolution mesh from the decimate or reduce phases and creates a very simplified mesh out of it. Then it projects the excess geometry on a normal map. The results are compressed as I described before and stored in a cell definition file. These are the files that are sent to the client for rendering. At this point the processing for a single cell is pretty much done.

This process takes a high resolution mesh from the decimate or reduce phases and creates a very simplified mesh out of it. Then it projects the excess geometry on a normal map. The results are compressed as I described before and stored in a cell definition file. These are the files that are sent to the client for rendering. At this point the processing for a single cell is pretty much done.I have not covered here the generation of cities, architecture, forests and other elements. They blend into this sequence and also live in the farm, but I think they deserve a dedicated post.

Probably the most interesting aspect of writing a collective of programs like this was how to make it reliable. Since I was targeting unreliable hardware to begin with, I realized failure had to be an integral part of the design. I devised a system where none of these processes expects you to do proper shutdown on them. They could just vaporize at any point. Actually I did not implement a way for them to exit gracefully. When one needs to close, the process is simply killed. The collective has to be resilient enough so no data corruption arises from such a failure.

Have you looked at ZeroMQ? For this sort of messaging-passing architecture, it's a godsend. Obviously you already have a working solution, but if you do a rewrite, ZeroMQ may be able to save you a lot of time.

ReplyDelete@Justin: ZeroMQ is alright, but I don't really have any problem that cannot be solved with traditional sockets. You would be surprised about how little code in my solution deals with the messaging transport. I would use a library only if it does something I don't know how to do, or that I cannot learn how to do. Even then I would loose sleep over the fear of ending with a dead horse attached to my carriage.

ReplyDeleteVery interesting topic! I love it. Something I've been struggling with a lot myself recently has been how to generate the surface mesh and be able to tell the 'contour' system to generate parts of the mesh as smooth / rounded and parts as sharp. Example: A flat voxel surface with only a few voxels above the surface. Is it going to be rendered as a smooth mound or a small cliff face? How do you determine what's a cliff, and what's a rolling hill?

ReplyDelete@Scott: It is all in the contouring method you choose. If it recovers sharp features then it can also do smooth surfaces. Do not use the classic Marching Cubes for instance. Everything out of it looks like a blob. Extended Marching Cubes can do sharp features but it is a lot of work for nothing. The method I use is Dual Contouring. You can search this blog for those terms and you will find a post with some useful links, some have working source code.

ReplyDeleteOnce your contouring can reproduce sharp edges, any sharp features in the implicit function you use will produce meshes with clean sharp edges.

nice :)

ReplyDeleteYou're doing so much more than 'just' creating a virtual world.

ReplyDeleteWhat you're doing is just fabulous!

That is really amazing! It is incredible the amount of time and energy that you have put into this... which is only surpassed by the quality of the resulting product.

ReplyDeleteI really want to know why are you are doing this? For the LOLs? To get a job somewhere? For a patent? What is driving you to do this?

@dehaul: Thanks, but don't be fooled by the shinny stuff. I have not spent much time or energy on this. I have been reguritating the same algorithms for a long time. If you check the very first video I posted in this blog you will see pretty much everything was already there.

ReplyDeleteMy goal is to create a game to showcase this system, sell it and hopefully license the technology. But I'm progressing so slowly any novelty in what I do will be gone by then.

Cool, but wait, why 8 Gigabytes of Ram just for minimum requirement?

ReplyDeleteBeing this procedural I'd have said much of the burden lied onto cpu cicles.

Couldn't be there a system that swaps according to the moment the advantages of storaging and the sparing of procedurals, maybe using reference points like polygon and across them, something like a voxel approximation of a nurbs (a 3d bezier curve also called spline), in place of the texures different leves of noises an displacement + details "tissue crease" effects, etc. to simulate mountains or water and other curves and effect depenting on what you wish.

Just a thought, because this blog hooked me.

bye and thanks.

Antome (couldn't log with yahoo)

8 GB RAM is the minimum requirement for the machines I'm selecting for the farm. This does not mean a farm node cannot run on 4 GB for instance.

DeleteThat being said, memory is cheaper than time. Making a solution slower just to save memory does not make sense to me. Time is money.

In a farm node, most of the RAM is taken by the contouring process. My focus was speed, so I had to make sure default memory management would not get in the way. Even if the contouring is done adaptively over an octree, I assume the worst case scenario from the onset. I wrote the memory management logic to be aligned with the octree and the contouring algoritm that runs using OpenCL. In most cases a large percentage of the reserved memory is not used, but when bad cases appear, the system is able to deal with them without a hitch. This is of course at the expense of having a large pool of memory locked all the time. Again, since my top concern is speed, it pays.

I love this topic and I wish I could spend some time on it.

It is common for programmers to adopt a wishful thinking stance when it comes to memory management, a garbage collector being the worst case, then we are surprised when our solution is performing poorly due to unpredictable hikes from memory fragmentation, swapping and thrashing. Memory management is an integral part of any algorithm. In many cases you can afford not to care, a garbage collector will do great. In other cases it will be the core of everything you do.

Awesome reply, thanks.

DeleteWell, I read this is a farm so a voxel render farm, not like Pixar render farm, but to bake complex procedural graphic solutions via parameters, getting the most natural graphic results, right? So the realtime engine-editor would be a separate subject, then it would make sense to "compress" (like most realtime poly engine do via poligons)using a mix of procedural and raster graphic. Example, you use something that would usually be a handful of polygon tiles, then along this reference you generate the voxel (also just heightmapped) approximation of convex and concave spline, to generate a soft mound, you don't have to store so much voxel mode because they get rotated and affine distorted to blend together. Still in an octree or something similar. That is just an idea, I admit I didn't code much more than a battleship with ship instancing, lol :D. But i pictured that simple procedural solution can be used in realtime, I think at how fast were the Unreal procedural water back in '98 or the displacement mapping now (though lame at times).

I agree about wishful thinking, by garbage collector you mean something along the line of swapping the same memory location for, like, different nodes, when others are far away or compressed, or view portals or Pvs?

And about memory fragmentation you mean by un unfragmented having all nodes in order of position, allocated in memory close together, but at the cost of not being able to move them real time?

Antome

Note I never store voxels. Currently I have three different entities in the procedural world: terrain, trees and architecture. Each one is represented in very memory efficient forms. The contouring phase takes these abstract definitions and outputs a high detail polygonal surface, which is later compressed for realtime rendering.

DeleteBy garbage collector I meant something like Java's garbage collector.

Memory fragmentation is a nasty effect found in default heap memory managers. If a program runs for a very long time and performs a lot of memory allocation for blocks of different sizes, even if there are no leaks in the code, you will end of with a lot if smaller sized available blocks. Even if they add up to a lot of memory, a large block cannot be allocated anymore. This is similar to what happens in hard drives after you have deleted and created many files. You need to run a defrag tool so your HD is fast again. With memory it is worse, instead of running slower memory allocation just fails despite the fact there is enough memory.

Thanks again for your time. So the farm auto edits "levels" via parameters. What's the realtime engine instead? You said you run it at 60fps. The compression is good at LODing the geometry, but so it's not stored as voxel, if by voxel you mean a "[x][y][z]" matrix, what do you use instead. While lodding is good to streamlin the contour of massive surface to sum the noise, how about grass, grain fields an other such as fine and spiky details? Crysis is good at instancing millions of them just as a visual effect, just to say, even if the actual geometry of the land is actually low res and dull.

DeleteI have now present the big hassle that fragmentation could cause, two pieces of data have free space in between then, suddenly you need to allocate some space but it's bigger than the free space available between all of them. The ideal is to have safe big chunks of space allocated, and simply swap the objects the same space is representing, but I'm not sure. Is this a problem with octrees more than with uncompressed space? Hey you really got me to think more about this issue about my possible idea, thanks.

Antome

For realtime is just polygons. Grass, flowers and foliage uses instanced billboards. Small details like pebbles and little cracks is in the textures. You can see severals posts I did on those topics at the end of 2010.

DeleteSorry, I'm italian, I meant seafight, not battle ship.

ReplyDeleteAnd obviously by distorting these voxel maps of curves the polygon is used as a reference, maybe some materials can be generated along these surfacese, like brick or wood planks.

Antome

One of the benefits I see in voxel data is that it is deform-able/malleable in the game. Judging by this post, it seems you have sacrificed that aspect for speed?

ReplyDeleteIf that is the case what benefit are you getting out of voxels?

Also, I was under the impression that dual-contouring be done in real time, why do you need a render farm?

Correct, I do not care much about players modifying the game world at this point. The goal of this project iteration is to have good looking content without requiring much effort from game designers and artists.

DeleteThe benefits of voxels still apply in this context, only for the game maker. The main advantage they bring is you can easily combine layers of data from very different algorithm and still obtain clean watertight meshes.

Dual contouring is quite fast and could be performed in real time. The actual procedural generation is what takes time, of course depending on how much detail you want. If you are doing many layers of terrain, forests and intricate cities, and you want to have several kilometers into the horizon, the current generation of hardware cannot cope.

I guess I didn't understand the sense of scale you are creating.

DeleteI might suggest a few posts with something along the lines of a world map, it would really help illustrate the sheer level of detail you are creating. (perhaps you've done so already? I haven't gone through all the posts yet)

Are you doing any culling of the meshes generated by multi-layered volumes when parts get occluded?

ReplyDelete